Bhishma, Nuremberg, and When Humans Stop Thinking

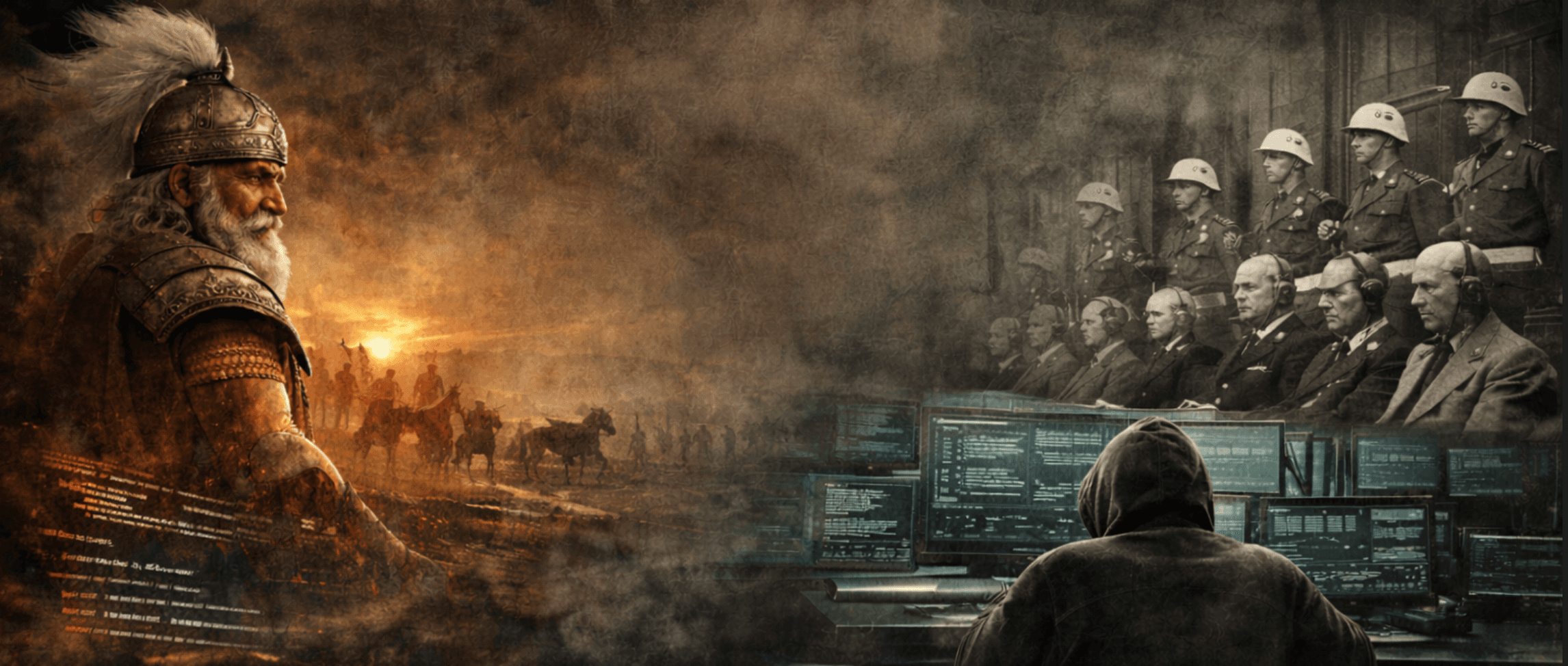

Bhishma is one of the most tragic figures in the Mahabharata—not because he lacked moral clarity, but because he had too much of it and still failed to act.

In the epic, Bhishma is not a villain or a bystander. He is the most respected elder of the kingdom, a master of law and ethics—the person everyone agrees understands dharma better than anyone else in the room.

He knew what was right.

He knew what was wrong.

And yet, when it mattered most, he stood still.

Bhishma’s justification was simple and devastatingly familiar: “I am bound by my duty.”

As a young man, he had taken an extraordinary vow—to renounce personal power and ambition forever, and to serve the throne unquestioningly, no matter who occupied it or how it was used.

That vow made him incorruptible.

It also made him immobile.

Duty to the vow he could not break.

Duty to the throne he would not abandon.

Duty to the system he had helped uphold, even as it decayed.

He was not evil.

He was obedient.

That distinction matters, because history has taught us—repeatedly—that some of the worst failures don’t come from malice. They come from people who stop thinking at precisely the moment when thinking is required.

The Nuremberg Defense Was Not About Monsters

After World War II, many of the accused at the Nuremberg Trials offered the same explanation: “I was only following orders.”

The world rejected that defense. The rejection did not deny that coercion and hierarchy were real. It denied that they cancel moral agency.

The principle that came out of the trials was clear:

Obedience does not erase responsibility.

Systems do not commit crimes. People do.

What’s striking is that the Nuremberg defense wasn’t about cruelty—it was about abdication. About handing over judgment to authority and calling it duty.

If this feels uncomfortably close to Bhishma, it should.

AI Didn’t Create This Problem

AI didn’t invent moral abdication.

It just made it scalable.

We’re entering a phase where AI systems don’t just suggest—they act.

They open pull requests.

They recommend architectural changes.

They flag risks, approve workflows, and increasingly, execute decisions.

And with that comes a new, dangerously convenient sentence:

“The system decided.”

This is not a technical statement.

It’s a moral one.

AI doesn’t remove responsibility—it creates plausible deniability at machine speed.

What I’ve Seen in Practice

In real engineering teams, the story isn’t fear or resistance. It’s subtler.

First, people struggle just to use AI effectively.

Then quality issues appear—because AI will generate something even when standards are vague.

Only later does the hardest realization land: ownership never left the human.

AI-generated code doesn’t fail differently.

It fails faster.

What changes is not the nature of responsibility, but its concentration. A single decision, poorly reviewed, can now propagate across a codebase at a speed we’ve never had to manage before.

Interestingly, AI also shifts where effort lives. Typing becomes cheap. Thinking becomes expensive. Code reviews move away from syntax toward architecture, correctness, and long-term consequences.

AI doesn’t replace judgment.

It exposes how much we relied on muscle memory instead of thought.

The New Bhishma Risk

The modern Bhishma won’t stand on a battlefield.

He’ll sit behind dashboards.

He’ll approve pipelines.

He’ll trust systems that are “working as designed.”

And like Bhishma, he will fail not for lack of knowledge, but because he treats faithful execution as righteousness and stops questioning when questioning is required.

History shows us that the hardest failures don’t come from bad intent.

They come from people faithfully executing systems without enough questioning built in.

Krishna’s Correction (Without the Theology)

Krishna’s message to Arjuna was not “do your duty blindly.”

It was: think, discern, and then act—without hiding behind outcomes.

Translated into modern systems thinking:

Delegation is not abdication.

Automation is not absolution.

Non-attachment does not mean non-responsibility.

AI can assist judgment.

It cannot replace it.

The moment we let systems think for us instead of with us, we recreate the conditions that history has already judged harshly.
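
For teams that want "delegation is not abdication" to be more than a slogan, one option is to make ownership a precondition in the pipeline itself. The sketch below is a minimal Python illustration, not a reference implementation: `ProposedAction`, `execute_with_owner`, `UnownedActionError`, and the `NON_OWNERS` list are all hypothetical names invented for this example. It refuses to run an automated change unless a named human has accepted it and written down why.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class ProposedAction:
    """A change suggested by an automated system (hypothetical structure)."""
    description: str
    proposed_by: str                 # e.g. the name of an AI agent
    owner: Optional[str] = None      # the human who accepts responsibility
    rationale: Optional[str] = None  # the owner's own reason for approving


class UnownedActionError(Exception):
    """Raised when no accountable human is attached to an action."""


# Values that name a process rather than a person (illustrative list only).
NON_OWNERS = {"", "system", "the system", "ai", "automation", "pipeline"}


def execute_with_owner(action: ProposedAction, run: Callable[[], None]) -> str:
    """Run the action only if a named human owns it and has stated why."""
    owner = (action.owner or "").strip()
    if owner.lower() in NON_OWNERS:
        raise UnownedActionError(f"No human owner for: {action.description}")
    if not (action.rationale or "").strip():
        raise UnownedActionError(f"{owner} approved without a stated rationale")
    run()
    # The returned record names a person, never a process.
    return f"{owner} owns '{action.description}' (proposed by {action.proposed_by})"
```

A gate like this does not make the approval thoughtful. It only ensures that "the system decided" is never an available answer.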

A Quiet Test

Here’s a simple question worth asking as AI becomes more autonomous:

If this decision causes harm, who would say “I own this”?

If the answer is vague, distributed, or points to “the system,” we already know how that story ends.

We’ve read it before.

In epics.

In court transcripts.

And now, quietly, in our codebases.

The real risk isn’t artificial intelligence.

It’s when humans stop thinking—and call it progress.